Delta Lake catalog

A Delta Lake catalog is an external catalog that enables you to query data from Delta Lake without ingestion into CelerData Cloud Serverless. To ensure successful SQL workloads on your Delta Lake cluster, CelerData must be able to access the storage system and metastore of your Delta Lake cluster. CelerData supports the following storage systems and metastores:

- Object storage such as AWS S3

- Metastore such as Hive metastore or AWS Glue

Usage notes

- The file format of Delta Lake that CelerData supports is Parquet. Parquet files support the following compression formats: SNAPPY, LZ4, ZSTD, GZIP, and NO_COMPRESSION.

- The data types of Delta Lake that CelerData does not support are MAP and STRUCT.

Create a Delta Lake catalog

Syntax

CREATE EXTERNAL CATALOG <catalog_name>

[COMMENT <comment>]

PROPERTIES

(

"type" = "deltalake",

MetastoreParams,

StorageCredentialParams,

MetadataUpdateParams

)

Parameters

catalog_name

The name of the Delta Lake catalog. The naming conventions are as follows:

- The name can contain letters, digits (0-9), and underscores (_). It must start with a letter.

- The name is case-sensitive and cannot exceed 1023 characters in length.

comment

The description of the Delta Lake catalog. This parameter is optional.

type

The type of your data source. Set the value to deltalake.

MetastoreParams

A set of parameters about how CelerData integrates with the metastore of your data source.

Hive metastore

If you choose Hive metastore as the metastore of your data source, check that CelerData can access the host of your Hive metastore.

NOTE

In normal cases, you can take one of the following actions to enable integration between CelerData and your Hive metastore:

- Deploy CelerData and your Hive metastore on the same VPC.

- Configure a VPC peering connection between the VPC hosting CelerData and the VPC hosting your Hive metastore.

Then, check the security group of your Hive metastore to ensure that its inbound rules allow traffic from CelerData's security group and that the allowed port range covers the default port 9083.

"hive.metastore.type" = "hive",

"hive.metastore.uris" = "<hive_metastore_uri>"

The following table describes the parameters you need to configure in MetastoreParams.

| Parameter | Required | Description |

|---|---|---|

| hive.metastore.type | Yes | The type of metastore that you use for your Delta Lake cluster. Set the value to hive. |

| hive.metastore.uris | Yes | The URI of your Hive metastore. Format: thrift://<metastore_IP_address>:<metastore_port>. If high availability (HA) is enabled for your Hive metastore, you can specify multiple metastore URIs and separate them with commas (,), for example, "thrift://<metastore_IP_address_1>:<metastore_port_1>","thrift://<metastore_IP_address_2>:<metastore_port_2>","thrift://<metastore_IP_address_3>:<metastore_port_3>". |

AWS Glue

If you choose AWS Glue as the metastore of your data source, which is supported only when you choose AWS S3 as storage, take one of the following actions:

- To choose the instance profile-based authentication method, configure MetastoreParams as follows:

"hive.metastore.type" = "glue",

"aws.glue.use_instance_profile" = "true",

"aws.glue.region" = "<aws_glue_region>"

- To choose the assumed role-based authentication method, configure MetastoreParams as follows:

"hive.metastore.type" = "glue",

"aws.glue.use_instance_profile" = "true",

"aws.glue.iam_role_arn" = "<iam_role_arn>",

"aws.glue.region" = "<aws_glue_region>"

- To choose the IAM user-based authentication method, configure MetastoreParams as follows:

"hive.metastore.type" = "glue",

"aws.glue.use_instance_profile" = "false",

"aws.glue.access_key" = "<iam_user_access_key>",

"aws.glue.secret_key" = "<iam_user_secret_key>",

"aws.glue.region" = "<aws_glue_region>"

The following table describes the parameters you need to configure in MetastoreParams.

| Parameter | Required | Description |

|---|---|---|

| hive.metastore.type | Yes | The type of metastore that you use for your Delta Lake cluster. Set the value to glue. |

| aws.glue.use_instance_profile | Yes | Specifies whether to enable the instance profile-based authentication method and the assumed role-based authentication method. Valid values: true and false. Default value: false. |

| aws.glue.iam_role_arn | No | The ARN of the IAM role that has privileges on your AWS Glue Data Catalog. If you use the assumed role-based authentication method to access AWS Glue, you must specify this parameter. |

| aws.glue.region | Yes | The region in which your AWS Glue Data Catalog resides. Example: us-west-1. |

| aws.glue.access_key | No | The access key of your AWS IAM user. If you use the IAM user-based authentication method to access AWS Glue, you must specify this parameter. |

| aws.glue.secret_key | No | The secret key of your AWS IAM user. If you use the IAM user-based authentication method to access AWS Glue, you must specify this parameter. |

For information about how to choose an authentication method for accessing AWS Glue and how to configure an access control policy in the AWS IAM Console, see Authentication parameters for accessing AWS Glue.

StorageCredentialParams

A set of parameters about how CelerData integrates with your object storage.

AWS S3

If you choose AWS S3 as storage for your Delta Lake cluster, take one of the following actions:

- To choose the instance profile-based authentication method, configure StorageCredentialParams as follows:

"aws.s3.use_instance_profile" = "true",

"aws.s3.region" = "<aws_s3_region>"

- To choose the assumed role-based authentication method, configure StorageCredentialParams as follows:

"aws.s3.use_instance_profile" = "true",

"aws.s3.iam_role_arn" = "<iam_role_arn>",

"aws.s3.region" = "<aws_s3_region>"

- To choose the IAM user-based authentication method, configure StorageCredentialParams as follows:

"aws.s3.use_instance_profile" = "false",

"aws.s3.access_key" = "<iam_user_access_key>",

"aws.s3.secret_key" = "<iam_user_secret_key>",

"aws.s3.region" = "<aws_s3_region>"

The following table describes the parameters you need to configure in StorageCredentialParams.

| Parameter | Required | Description |

|---|---|---|

| aws.s3.use_instance_profile | Yes | Specifies whether to enable the instance profile-based authentication method and the assumed role-based authentication method. Valid values: true and false. Default value: false. |

| aws.s3.iam_role_arn | No | The ARN of the IAM role that has privileges on your AWS S3 bucket. If you use the assumed role-based authentication method to access AWS S3, you must specify this parameter. |

| aws.s3.region | Yes | The region in which your AWS S3 bucket resides. Example: us-west-1. |

| aws.s3.access_key | No | The access key of your IAM user. If you use the IAM user-based authentication method to access AWS S3, you must specify this parameter. |

| aws.s3.secret_key | No | The secret key of your IAM user. If you use the IAM user-based authentication method to access AWS S3, you must specify this parameter. |

For information about how to choose an authentication method for accessing AWS S3 and how to configure an access control policy in AWS IAM Console, see Authentication parameters for accessing AWS S3.
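To illustrate how MetastoreParams and StorageCredentialParams combine in one statement, the following sketch creates a catalog that uses the IAM user-based authentication method for both AWS Glue and AWS S3. The catalog name deltalake_catalog_glue_iam_user and the region us-west-2 are assumptions made for illustration, and the key placeholders must be replaced with your own values.

```sql
-- Hypothetical catalog name and region; replace the placeholders with the
-- access key and secret key of your own IAM user.
CREATE EXTERNAL CATALOG deltalake_catalog_glue_iam_user
PROPERTIES
(
    "type" = "deltalake",
    -- MetastoreParams: AWS Glue, IAM user-based authentication
    "hive.metastore.type" = "glue",
    "aws.glue.use_instance_profile" = "false",
    "aws.glue.access_key" = "<iam_user_access_key>",
    "aws.glue.secret_key" = "<iam_user_secret_key>",
    "aws.glue.region" = "us-west-2",
    -- StorageCredentialParams: AWS S3, IAM user-based authentication
    "aws.s3.use_instance_profile" = "false",
    "aws.s3.access_key" = "<iam_user_access_key>",
    "aws.s3.secret_key" = "<iam_user_secret_key>",
    "aws.s3.region" = "us-west-2"
);
```

Both use_instance_profile parameters are set to false here because the tables above tie the value true to the instance profile-based and assumed role-based methods.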

MetadataUpdateParams

A set of parameters about how CelerData updates the cached metadata of Delta Lake. This parameter set is optional.

CelerData implements the automatic asynchronous update policy by default.

In most cases, you can ignore MetadataUpdateParams and do not need to tune its policy parameters, because their default values already work well out of the box.

However, if the frequency of data updates in Delta Lake is high, you can tune these parameters to further optimize the performance of automatic asynchronous updates.

NOTE

In most cases, if your Delta Lake data is updated at a granularity of 1 hour or less, the data update frequency is considered high.

| Parameter | Required | Description |

|---|---|---|

| enable_metastore_cache | No | Specifies whether CelerData caches the metadata of Delta Lake tables. Valid values: true and false. Default value: true. The value true enables the cache, and the value false disables the cache. |

| enable_remote_file_cache | No | Specifies whether CelerData caches the metadata of the underlying data files of Delta Lake tables or partitions. Valid values: true and false. Default value: true. The value true enables the cache, and the value false disables the cache. |

| metastore_cache_refresh_interval_sec | No | The time interval at which CelerData asynchronously updates the cached metadata of Delta Lake tables or partitions. Unit: seconds. Default value: 7200, which is 2 hours. |

| remote_file_cache_refresh_interval_sec | No | The time interval at which CelerData asynchronously updates the cached metadata of the underlying data files of Delta Lake tables or partitions. Unit: seconds. Default value: 60. |

| metastore_cache_ttl_sec | No | The time interval after which CelerData automatically discards the cached metadata of Delta Lake tables or partitions. Unit: seconds. Default value: 86400, which is 24 hours. |

| remote_file_cache_ttl_sec | No | The time interval after which CelerData automatically discards the cached metadata of the underlying data files of Delta Lake tables or partitions. Unit: seconds. Default value: 129600, which is 36 hours. |

For more information, see the "Understand automatic asynchronous update" section of this topic.
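As a sketch of how these parameters might be tuned for frequently updated data, the statement below adds two MetadataUpdateParams to a catalog that uses Hive metastore and instance profile-based access to AWS S3. The catalog name deltalake_catalog_hms_tuned and the interval values are illustrative assumptions, not recommendations, and the values are written as quoted strings on the assumption that these properties follow the same convention as the others.

```sql
-- Hypothetical catalog name; the interval values are examples only.
CREATE EXTERNAL CATALOG deltalake_catalog_hms_tuned
PROPERTIES
(
    "type" = "deltalake",
    "hive.metastore.type" = "hive",
    "hive.metastore.uris" = "thrift://xx.xx.xx.xx:9083",
    "aws.s3.use_instance_profile" = "true",
    "aws.s3.region" = "us-west-2",
    -- Refresh cached table and partition metadata every 10 minutes
    -- instead of the default 2 hours.
    "metastore_cache_refresh_interval_sec" = "600",
    -- Refresh cached data file metadata every 30 seconds
    -- instead of the default 60 seconds.
    "remote_file_cache_refresh_interval_sec" = "30"
);
```

Shorter intervals trade more frequent metastore and object storage requests for fresher metadata, so it is usually worth lowering them only as far as the data update frequency requires.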

View Delta Lake catalogs

You can use SHOW CATALOGS to query all catalogs in your CelerData cloud account:

SHOW CATALOGS;

You can also use SHOW CREATE CATALOG to query the creation statement of an external catalog. The following example queries the creation statement of a Delta Lake catalog named deltalake_catalog_glue:

SHOW CREATE CATALOG deltalake_catalog_glue;

Drop a Delta Lake catalog

You can use DROP CATALOG to drop an external catalog.

The following example drops a Delta Lake catalog named deltalake_catalog_glue:

DROP CATALOG deltalake_catalog_glue;

View the schema of a Delta Lake table

You can use one of the following syntaxes to view the schema of a Delta Lake table:

- View schema

DESC[RIBE] <catalog_name>.<database_name>.<table_name>

- View schema and location from the CREATE statement

SHOW CREATE TABLE <catalog_name>.<database_name>.<table_name>
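For instance, with the deltalake_catalog_glue catalog used elsewhere in this topic, the two syntaxes could look as follows. The database name sales_db and the table name orders are hypothetical and only serve to make the statements concrete.

```sql
-- sales_db and orders are hypothetical names.
-- View the column names and types of the table.
DESC deltalake_catalog_glue.sales_db.orders;

-- View the schema together with the table location, as a CREATE statement.
SHOW CREATE TABLE deltalake_catalog_glue.sales_db.orders;
```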

Query a Delta Lake table

Use SHOW DATABASES to view the databases in your Delta Lake cluster:

SHOW DATABASES FROM <catalog_name>;

Use SET CATALOG to switch to the destination catalog in the current session:

SET CATALOG <catalog_name>;

Then, use USE to specify the active database in the current session:

USE <db_name>;

Or, you can use USE to directly specify the active database in the destination catalog:

USE <catalog_name>.<db_name>;

Use SELECT to query the destination table in the specified database:

SELECT count(*) FROM <table_name> LIMIT 10
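Put together, a query session might look like the following sketch. It assumes the deltalake_catalog_glue catalog from this topic and a hypothetical database sales_db that contains a hypothetical table orders.

```sql
-- sales_db and orders are hypothetical names.
-- Switch to the catalog, then pick the active database.
SET CATALOG deltalake_catalog_glue;
USE sales_db;

-- Count all rows in the table.
SELECT count(*) FROM orders;

-- Preview a few rows.
SELECT * FROM orders LIMIT 10;
```

The two-step SET CATALOG and USE sequence can be replaced by the single statement USE deltalake_catalog_glue.sales_db, as described above.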

Examples

Suppose your Delta Lake cluster uses Hive metastore as its metastore and AWS S3 as object storage, and you use the instance profile-based authentication method to access your AWS S3 bucket located in the us-west-2 region. In this situation, you can run the following command to create a catalog named deltalake_catalog_hms to access your Delta Lake data:

CREATE EXTERNAL CATALOG deltalake_catalog_hms

PROPERTIES

(

"type" = "deltalake",

"hive.metastore.type" = "hive",

"hive.metastore.uris" = "thrift://xx.xx.xx.xx:9083",

"aws.s3.use_instance_profile" = "true",

"aws.s3.region" = "us-west-2"

);

Suppose your Delta Lake cluster uses AWS Glue as its metastore and AWS S3 as object storage, and you use the assumed role-based authentication method to access your AWS S3 bucket located in the us-west-1 region. In this situation, you can run the following command to create a catalog named deltalake_catalog_glue to access your Delta Lake data:

CREATE EXTERNAL CATALOG deltalake_catalog_glue

PROPERTIES

(

"type" = "deltalake",

"hive.metastore.type" = "glue",

"aws.glue.use_instance_profile" = "true",

"aws.glue.iam_role_arn" = "arn:aws:iam::51234343412:role/role_name_in_aws_iam",

"aws.glue.region" = "us-west-1",

"aws.s3.use_instance_profile" = "true",

"aws.s3.iam_role_arn" = "arn:aws:iam::51234343412:role/role_name_in_aws_iam",

"aws.s3.region" = "us-west-1"

);

Synchronize metadata updates

By default, CelerData caches the metadata of Delta Lake and automatically updates the metadata in asynchronous mode to deliver better performance. Additionally, after some schema changes or table updates are made on a Delta Lake table, you can also use REFRESH EXTERNAL TABLE to update its metadata, thereby ensuring that CelerData can obtain up-to-date metadata at its earliest opportunity and generate appropriate execution plans:

REFRESH EXTERNAL TABLE <table_name>

Appendix: Understand automatic asynchronous update

Automatic asynchronous update is the default policy that CelerData uses to update the metadata in Delta Lake catalogs.

By default (namely, when the enable_metastore_cache and enable_remote_file_cache parameters are both set to true), if a query hits a partition of a Delta Lake table, CelerData automatically caches the metadata of the partition and the metadata of the underlying data files of the partition. The cached metadata is updated by using the lazy update policy.

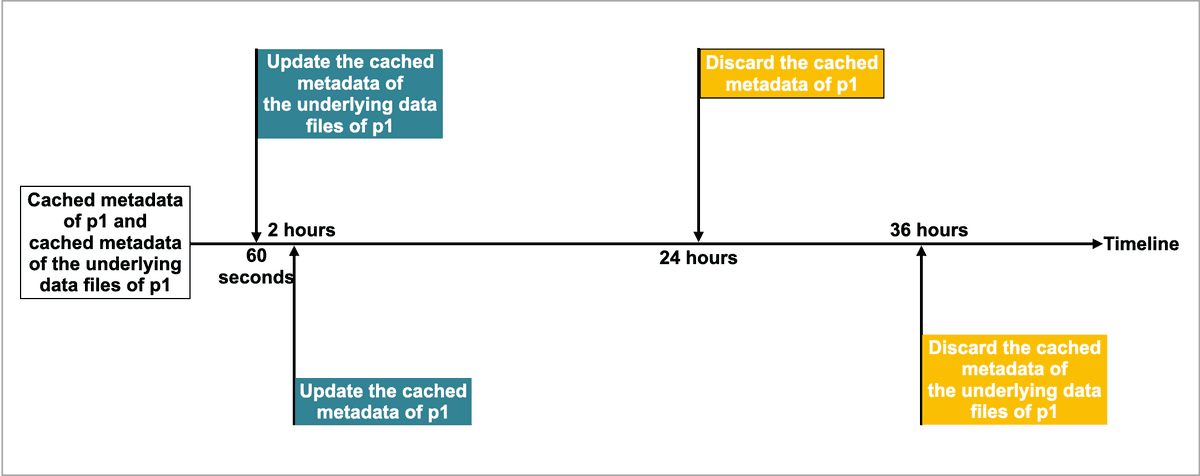

For example, there is a Delta Lake table named table2, which has four partitions: p1, p2, p3, and p4. A query hits p1, and CelerData caches the metadata of p1 and the metadata of the underlying data files of p1. Assume that the default time intervals to update and discard the cached metadata are as follows:

- The time interval (specified by the

metastore_cache_refresh_interval_secparameter) to asynchronously update the cached metadata ofp1is 2 hours. - The time interval (specified by the

remote_file_cache_refresh_interval_secparameter) to asynchronously update the cached metadata of the underlying data files ofp1is 60 seconds. - The time interval (specified by the

metastore_cache_ttl_secparameter) to automatically discard the cached metadata ofp1is 24 hours. - The time interval (specified by the

remote_file_cache_ttl_secparameter) to automatically discard the cached metadata of the underlying data files ofp1is 36 hours.

The following figure shows the time intervals on a timeline for easier understanding.

Then CelerData updates or discards the metadata in compliance with the following rules:

- If another query hits p1 again and less than 60 seconds have elapsed since the last update, CelerData does not update the cached metadata of p1 or the cached metadata of the underlying data files of p1.

- If another query hits p1 again and more than 60 seconds have elapsed since the last update, CelerData updates the cached metadata of the underlying data files of p1.

- If another query hits p1 again and more than 2 hours have elapsed since the last update, CelerData updates the cached metadata of p1.

- If p1 has not been accessed within 24 hours from the last update, CelerData discards the cached metadata of p1. The metadata will be cached again at the next query.

- If p1 has not been accessed within 36 hours from the last update, CelerData discards the cached metadata of the underlying data files of p1. The metadata will be cached again at the next query.
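When waiting for the next lazy update is not acceptable, for example right after a schema change on table2, a manual refresh with REFRESH EXTERNAL TABLE is an option. The sketch below assumes that table2 lives in a hypothetical database named sales_db in the deltalake_catalog_glue catalog.

```sql
-- sales_db is a hypothetical database name.
-- Make the catalog and database that contain table2 active.
SET CATALOG deltalake_catalog_glue;
USE sales_db;

-- Update the cached metadata of table2 now instead of waiting
-- for the refresh intervals described above.
REFRESH EXTERNAL TABLE table2;
```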